By ATS Staff - March 2nd, 2026


Prompt engineering is the practice of designing structured inputs that guide large language models (LLMs) to produce more accurate, logical, and useful outputs. As models like OpenAI’s GPT-4 evolved, researchers discovered that how you ask a question often matters as much as what you ask.

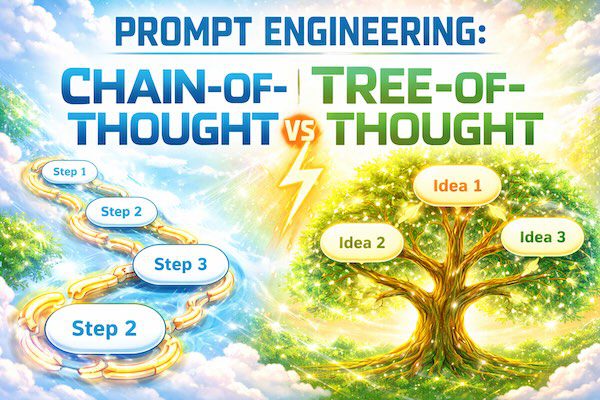

Among advanced prompting strategies, two reasoning-focused techniques stand out:

- Chain-of-Thought (CoT) Prompting

- Tree-of-Thought (ToT) Prompting

Both methods aim to improve reasoning performance, especially on complex tasks like math, planning, coding, and decision-making. However, they approach reasoning in fundamentally different ways.

What Is Chain-of-Thought (CoT) Prompting?

Chain-of-Thought prompting encourages the model to generate intermediate reasoning steps before producing a final answer.

Instead of asking:

What is 27 × 14?

You ask:

Solve step-by-step: What is 27 × 14?

The model then explains its reasoning process:

- 27 × 10 = 270

- 27 × 4 = 108

- 270 + 108 = 378

Final Answer: 378
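The difference between the two prompts, and the intermediate steps a model is expected to write out, can be sketched in Python. This is only an illustration: the helper names `cot_prompt` and `multiply_stepwise` are made up for this example, and no model is called.

```python
def cot_prompt(question: str) -> str:
    """Prefix a question with a step-by-step instruction (illustrative wording)."""
    return f"Solve step-by-step: {question}"


def multiply_stepwise(a: int, b: int) -> list[str]:
    """Split b into tens and ones and record each intermediate step as text."""
    tens, ones = (b // 10) * 10, b % 10
    part_tens, part_ones = a * tens, a * ones
    return [
        f"{a} × {tens} = {part_tens}",
        f"{a} × {ones} = {part_ones}",
        f"{part_tens} + {part_ones} = {part_tens + part_ones}",
    ]


if __name__ == "__main__":
    print(cot_prompt("What is 27 × 14?"))
    for step in multiply_stepwise(27, 14):
        print("-", step)
```

Each line produced by `multiply_stepwise` corresponds to one link in the chain, which is why a mistake in an early line carries through to the final sum.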

Why It Works

Large language models are trained on massive amounts of text that includes explanations and step-by-step reasoning. Explicitly prompting the model to “think step by step” steers it toward those patterns, and each intermediate step it writes becomes context for the next one.

Benefits of Chain-of-Thought

- Improves performance on arithmetic and logic tasks

- Increases transparency of reasoning

- Reduces careless errors

- Easy to implement (just add a reasoning instruction)

Limitations of Chain-of-Thought

- Reasoning is linear

- If an early step is wrong, the entire solution can collapse

- Doesn’t explore alternative solution paths

What Is Tree-of-Thought (ToT) Prompting?

Tree-of-Thought is a more advanced reasoning strategy inspired by tree search algorithms such as breadth-first and depth-first search.

Instead of following a single chain of reasoning, the model:

- Generates multiple possible reasoning paths

- Evaluates them

- Explores the most promising branches

- Selects the best final solution

This approach was formalized in 2023 by researchers at Princeton University and Google DeepMind.
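The generate, evaluate, explore, and select loop can be sketched as a small beam search. This is a simplified illustration, not the published implementation: in a real ToT system `propose` and `score` would be model calls, whereas here they are plain Python functions over a toy problem (pick numbers from `CHOICES` that sum to `TARGET`), and all names are assumptions made for the example.

```python
from typing import Callable, List, Optional, Tuple

Thought = Tuple[int, ...]  # a partial solution: the numbers picked so far


def tree_of_thought(
    root: Thought,
    propose: Callable[[Thought], List[Thought]],
    score: Callable[[Thought], float],
    is_solution: Callable[[Thought], bool],
    beam_width: int = 2,
    max_depth: int = 5,
) -> Optional[Thought]:
    """Level-by-level search that keeps only the best `beam_width` thoughts."""
    frontier = [root]
    for _ in range(max_depth):
        # Generate: expand every surviving thought into candidate next steps.
        candidates = [child for thought in frontier for child in propose(thought)]
        # Select: stop as soon as one candidate solves the problem.
        for candidate in candidates:
            if is_solution(candidate):
                return candidate
        # Evaluate: score candidates and prune the infeasible ones.
        scored = [(score(c), c) for c in candidates]
        viable = [(s, c) for s, c in scored if s != float("-inf")]
        if not viable:
            return None
        # Explore: carry only the most promising branches to the next level.
        viable.sort(key=lambda pair: pair[0], reverse=True)
        frontier = [c for _, c in viable[:beam_width]]
    return None


# Toy problem (an assumption for illustration only).
CHOICES = (2, 3, 5)
TARGET = 11


def propose(thought: Thought) -> List[Thought]:
    return [thought + (n,) for n in CHOICES]


def score(thought: Thought) -> float:
    remaining = TARGET - sum(thought)
    # Overshooting the target can never be repaired, so mark it for pruning.
    return float("-inf") if remaining < 0 else -float(remaining)


def is_solution(thought: Thought) -> bool:
    return sum(thought) == TARGET


if __name__ == "__main__":
    print(tree_of_thought((), propose, score, is_solution))
```

With these settings the highest-scoring branch after two picks is `(5, 5)`, which overshoots on every extension; the search recovers because the beam also kept a second branch. A single linear chain that committed to `(5, 5)` would have had nowhere to go.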

How It Differs Conceptually

Chain-of-Thought = One path forward

Tree-of-Thought = Many branches explored

Think of it like:

- CoT = Walking straight down one road

- ToT = Standing at an intersection and exploring multiple routes before choosing the best one

Example Comparison

Problem:

A farmer has animals with a total of 20 heads and 56 legs. How many chickens and cows are there?

Chain-of-Thought Approach

The model might reason:

- Let chickens = x, cows = y

- x + y = 20

- 2x + 4y = 56

- Subtract twice the first equation from the second: 2y = 16, so y = 8 and x = 20 − 8 = 12

- Result: 12 chickens and 8 cows

Single reasoning chain → final answer.
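The same chain works for any heads-and-legs totals. A minimal sketch, assuming only chickens (2 legs) and cows (4 legs); the function name `solve_heads_and_legs` is illustrative:

```python
from typing import Tuple


def solve_heads_and_legs(heads: int, legs: int) -> Tuple[int, int]:
    """Solve x + y = heads and 2x + 4y = legs; return (chickens, cows)."""
    # Subtracting 2 * (x + y = heads) from (2x + 4y = legs) leaves 2y = legs - 2 * heads.
    twice_cows = legs - 2 * heads
    if twice_cows < 0 or twice_cows % 2 != 0:
        raise ValueError("no whole-number solution")
    cows = twice_cows // 2
    chickens = heads - cows
    if chickens < 0:
        raise ValueError("no whole-number solution")
    return chickens, cows


if __name__ == "__main__":
    print(solve_heads_and_legs(20, 56))
```

Like the chain of thought it mirrors, the function follows one fixed sequence of steps and never considers an alternative route.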

Tree-of-Thought Approach

The model may:

- Generate multiple hypotheses:

  - Try more cows than chickens

  - Try more chickens than cows

- Evaluate feasibility

- Prune incorrect branches

- Confirm the solution that satisfies both constraints

Multiple reasoning branches → evaluation → best result.
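The branching version of the farmer problem can be sketched as follows. The two hypotheses become ranges of possible cow counts, a cheap bound check decides whether a whole branch is feasible, and only surviving branches are searched. The names `leg_count` and `explore`, and the bound-check strategy itself, are assumptions chosen for illustration.

```python
from typing import Dict, List, Optional, Tuple

HEADS, LEGS = 20, 56


def leg_count(cows: int) -> int:
    """Total legs when `cows` of the HEADS animals are cows and the rest chickens."""
    return 4 * cows + 2 * (HEADS - cows)


def explore(branches: Dict[str, range]) -> Tuple[Optional[Tuple[int, int]], List[str]]:
    """Evaluate each hypothesis, prune infeasible ones, and search the rest."""
    pruned: List[str] = []
    answer: Optional[Tuple[int, int]] = None
    for label, cow_range in branches.items():
        # Evaluate: legs grow with the number of cows, so the smallest and
        # largest cow counts in a branch bound the legs it can produce.
        low, high = leg_count(cow_range[0]), leg_count(cow_range[-1])
        if not (low <= LEGS <= high):
            pruned.append(label)  # Prune: no candidate here can match LEGS.
            continue
        # Explore the surviving branch candidate by candidate.
        for cows in cow_range:
            if leg_count(cows) == LEGS:
                answer = (HEADS - cows, cows)  # (chickens, cows)
    return answer, pruned


branches = {
    "more cows than chickens": range(11, HEADS + 1),
    "more chickens than cows": range(0, 10),
}

if __name__ == "__main__":
    print(explore(branches))
```

The "more cows than chickens" branch needs at least 62 legs, so it is discarded without testing a single candidate; the remaining branch yields 12 chickens and 8 cows.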

Structural Differences

| Feature | Chain-of-Thought | Tree-of-Thought |
|---|---|---|
| Reasoning style | Linear | Branching |
| Error recovery | Weak | Stronger |
| Exploration | Single path | Multiple paths |
| Complexity | Simple to implement | More complex |
| Best for | Math, logic, step problems | Planning, strategy, puzzles |

When to Use Chain-of-Thought

Use CoT when:

- The problem has a clear step-by-step solution

- The reasoning path is straightforward

- You want transparency

- Speed is important

Examples:

- Math calculations

- Coding logic

- Basic logical reasoning

When to Use Tree-of-Thought

Use ToT when:

- The problem requires exploration

- There are multiple possible solutions

- Strategy and evaluation are needed

- You want higher reliability

Examples:

- Game strategy

- Business decision analysis

- Complex planning

- Brainstorming multiple approaches

Implementation Differences in Practice

Simple CoT Prompt Template

Solve the problem step-by-step and explain your reasoning clearly before giving the final answer.

Simple ToT Prompt Template

Generate multiple possible reasoning paths. Evaluate each path. Prune weaker options. Select the best final answer. Explain your decision.

ToT often requires more structured control, sometimes through external orchestration code rather than a single prompt.
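A rough sketch of what that orchestration code might look like for a single round of branching. The CoT template uses the wording above; the proposal and evaluation templates, the `Score: N` reply format, and the `ask` callable (a stand-in for whatever model client you use) are all assumptions made for this example, not part of any library.

```python
import re
from typing import Callable

COT_TEMPLATE = (
    "Solve the problem step-by-step and explain your reasoning clearly "
    "before giving the final answer.\n\nProblem: {problem}"
)
PROPOSE_TEMPLATE = (
    "Problem: {problem}\n\nPropose reasoning path #{index}. "
    "Use a different approach from the other paths."
)
EVALUATE_TEMPLATE = (
    "Problem: {problem}\n\nCandidate reasoning:\n{path}\n\n"
    "Rate how promising this reasoning is from 1 to 10. Reply as 'Score: N'."
)


def parse_score(reply: str) -> int:
    """Extract N from 'Score: N'; an unparseable reply counts as the lowest score."""
    match = re.search(r"Score:\s*(\d+)", reply)
    return int(match.group(1)) if match else 0


def tot_single_round(problem: str, ask: Callable[[str], str], n_paths: int = 3) -> str:
    """Generate n_paths candidates, score each in a separate call, return the best."""
    paths = [
        ask(PROPOSE_TEMPLATE.format(problem=problem, index=i + 1))
        for i in range(n_paths)
    ]
    scores = [
        parse_score(ask(EVALUATE_TEMPLATE.format(problem=problem, path=path)))
        for path in paths
    ]
    best = max(range(n_paths), key=lambda i: scores[i])
    return paths[best]


def fake_model(prompt: str) -> str:
    """Canned replies so the sketch runs offline; replace with a real model call."""
    if "Rate how promising" in prompt:
        return "Score: 9" if "algebra" in prompt else "Score: 3"
    return "Use algebra." if "#2" in prompt else "Guess and check."


if __name__ == "__main__":
    problem = "20 heads and 56 legs: how many chickens and cows?"
    print(COT_TEMPLATE.format(problem=problem))
    print(tot_single_round(problem, fake_model))
```

Keeping generation and evaluation in separate calls gives the calling code control over how many branches exist and which ones survive, which a single all-in-one prompt cannot guarantee.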

Performance Insights

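Published results show:

- CoT significantly improves accuracy over direct answering on multi-step arithmetic and reasoning benchmarks, particularly with larger models.

- ToT can outperform CoT on problems that require search, such as the Game of 24 puzzle used in the original ToT paper.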

- ToT requires more computation and careful design.

In production systems, ToT is often implemented with controlled iterative prompting rather than a single instruction.
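To get a feel for the extra computation, here is a back-of-the-envelope count of model calls. It rests on stated assumptions rather than measurements: one call per proposed thought, one call per evaluation, and a fixed beam width at every level. Real systems may batch proposals or evaluations into fewer calls. The function name and parameters are hypothetical.

```python
def tot_calls(depth: int, beam_width: int, proposals_per_thought: int) -> int:
    """Estimate model calls for a beam-style ToT run under the assumptions above."""
    calls = 0
    frontier = 1  # the search starts from the bare problem
    for _ in range(depth):
        candidates = frontier * proposals_per_thought
        calls += candidates  # one generation call per candidate thought
        calls += candidates  # one evaluation call per candidate thought
        frontier = min(beam_width, candidates)
    return calls


COT_CALLS = 1  # a single prompt produces the whole chain

if __name__ == "__main__":
    print(COT_CALLS, tot_calls(depth=3, beam_width=2, proposals_per_thought=3))
```

Under these assumptions, a three-level search with a beam of 2 and 3 proposals per thought needs 30 calls where CoT needs one, which is why ToT is usually reserved for problems where a single chain is unreliable.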

Strengths and Weaknesses Summary

Chain-of-Thought Strengths

- Simple

- Fast

- Transparent

- Highly effective for structured problems

Chain-of-Thought Weaknesses

- Fragile if reasoning goes wrong

- No alternative exploration

Tree-of-Thought Strengths

- More robust

- Better for complex reasoning

- Can self-correct via evaluation

Tree-of-Thought Weaknesses

- Slower

- Requires more tokens

- More complex to implement

The Bigger Picture

Both techniques reflect a broader truth in prompt engineering:

The quality of reasoning depends on how reasoning is structured.

Chain-of-Thought unlocked a major leap in LLM reasoning performance. Tree-of-Thought extends this by adding search and evaluation mechanisms.

As models continue to improve, hybrid approaches—combining linear reasoning with structured branching—are becoming more common in advanced AI systems.

Final Thoughts

Chain-of-Thought is like thinking carefully.

Tree-of-Thought is like thinking strategically.

If you're building AI applications—whether educational tools, planning systems, or coding assistants—understanding when to use each technique can significantly improve output quality.

Prompt engineering is not just about better prompts.

It’s about designing better thinking.
